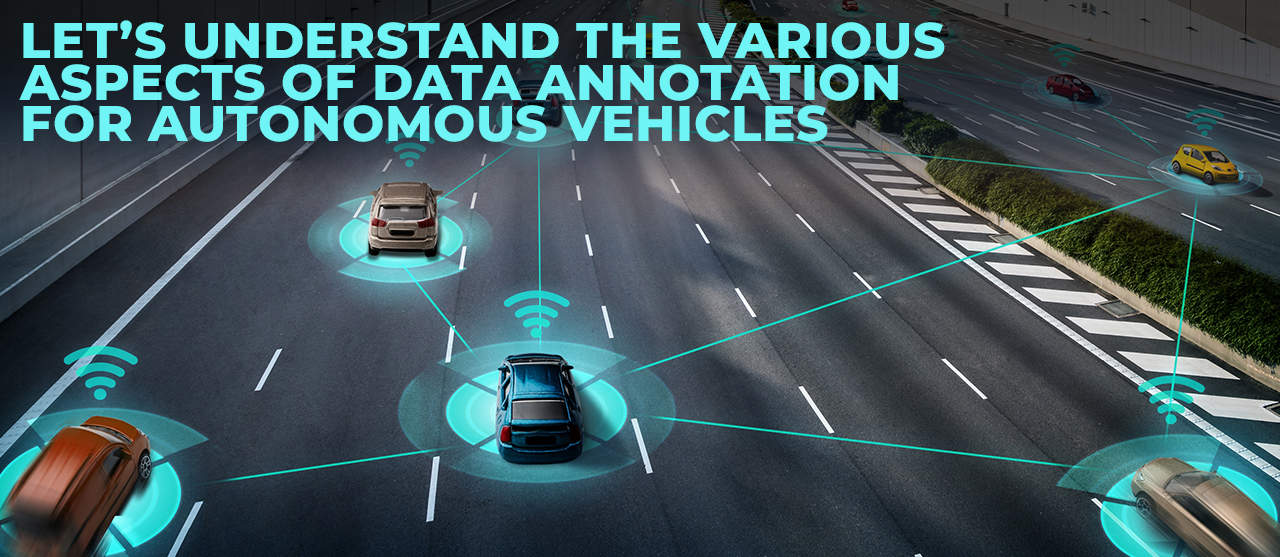

As autonomous vehicles (AVs) transition from prototypes to reality, one element stands out as the cornerstone of their success — data annotation. Behind every decision a self-driving car makes lies thousands of labeled images, videos, and sensor readings meticulously annotated to train advanced AI and machine learning (ML) models. Without accurately labeled data, even the most sophisticated algorithms cannot interpret the world with human-level precision.

Why Data Annotation Matters In Autonomous Driving

Building safe, intelligent, and scalable driverless vehicles requires high-quality annotated datasets that enable ML algorithms to understand visual cues, identify objects, and make split-second navigation decisions. According to Grand View Research, the global autonomous vehicle market is expected to reach $214.32 billion by 2030, growing at a CAGR of 19.9%, with data annotation playing a pivotal role in model training and validation.

A 2025 report estimates that AV applications account for roughly 47% of the demand for annotation services in roadway AI models. With millions of miles of driving data generated daily through LiDAR, radar, and camera sensors, the need for structured, high-quality annotation is greater than ever.

Data Annotation And Labeling For Autonomous Vehicles: Explained

To make autonomous navigation possible, algorithms must be trained to detect, track, and classify a range of real-world objects — from vehicles and pedestrians to traffic lights and obstacles. This process depends on supervised learning, which relies heavily on accurately labeled data.

Training datasets typically include:

- Images and videos of urban and rural driving environments

- LiDAR point clouds for 3D scene understanding

- Object classes such as vehicles, pedestrians, traffic signs, road markings, and animals

Each of these elements is meticulously annotated to teach AV systems how to perceive their surroundings and make safe driving decisions in real-time.

The Role Of Data Annotation In Autonomous Vehicle Systems

1. Object Detection

Object detection is the foundation of any self-driving system. Models trained on bounding-box and polygon annotations learn to recognize nearby objects such as cars, cyclists, pedestrians, and road debris, so the vehicle can react in time to prevent collisions.
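To make the bounding-box idea concrete, here is a minimal sketch of intersection over union (IoU), a common way to compare a predicted box against an annotated one. The `Box` schema, the label, and the pixel coordinates are illustrative assumptions, not a format described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned 2D box in pixel coordinates (hypothetical schema)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def area(self) -> float:
        # Clamp at zero so a degenerate box never yields a negative area.
        return max(0.0, self.x_max - self.x_min) * max(0.0, self.y_max - self.y_min)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes, in the range [0, 1]."""
    inter_w = min(a.x_max, b.x_max) - max(a.x_min, b.x_min)
    inter_h = min(a.y_max, b.y_max) - max(a.y_min, b.y_min)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0  # boxes do not overlap
    inter = inter_w * inter_h
    union = a.area() + b.area() - inter
    return inter / union if union > 0 else 0.0


# Example values are made up for illustration.
annotated = Box("pedestrian", 100, 50, 140, 150)
predicted = Box("pedestrian", 110, 60, 150, 160)
score = iou(annotated, predicted)
print(f"IoU = {score:.2f}")
```

With these example boxes the overlap is 2,700 square pixels out of a union of 5,300, so the score is about 0.51. The same measure can also be used to compare two annotators' boxes for the same object during quality checks.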

2. Lane Detection

Precise lane detection is critical for maintaining lane discipline and preventing accidents. Annotated datasets help train models to identify lane boundaries, curvature, and road edges even in complex lighting or weather conditions. Lane-detection approaches range from classical cues such as color gradients and ridge filters to deep convolutional neural networks (CNNs).
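As an illustration of what a lane label can look like, the sketch below assumes a lane boundary is annotated as a polyline of pixel points and resamples it at fixed image rows by linear interpolation. The point format, the function name `lane_x_at_rows`, and the sample coordinates are all assumptions made for this example.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in pixels; y grows downward in the image


def lane_x_at_rows(polyline: Sequence[Point], rows: Sequence[float]) -> List[Optional[float]]:
    """Linearly interpolate the lane's x position at each requested image row.

    Returns None for rows outside the annotated vertical extent.
    Assumes the y values of the polyline are all distinct.
    """
    pts = sorted(polyline, key=lambda p: p[1])  # order points top to bottom
    result: List[Optional[float]] = []
    for row in rows:
        x: Optional[float] = None
        for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
            if y0 <= row <= y1:
                t = (row - y0) / (y1 - y0)
                x = x0 + t * (x1 - x0)
                break
        result.append(x)
    return result


# Hypothetical annotation of a left lane boundary, near to far.
left_lane = [(300, 700), (380, 500), (440, 300)]
rows = [700, 600, 400, 200]
samples = lane_x_at_rows(left_lane, rows)
print(samples)  # [300.0, 340.0, 410.0, None]
```

Row 200 lies above the annotated extent, so it yields `None` rather than an extrapolated guess.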

3. Mapping And Localization

Accurate localization and mapping help AVs determine their exact position on the road. Annotated data supports HD map creation, landmark recognition, and environmental segmentation, enabling the vehicle to plan routes safely and efficiently.

4. Path Planning And Decision Making

Annotation also aids in motion prediction and trajectory planning. ML models trained with annotated sequences can analyze surrounding dynamics, forecast object movement, and plan optimal routes that balance speed, safety, and energy efficiency.
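To show how an annotated sequence can feed motion prediction, here is a minimal constant-velocity baseline, assuming each track is a list of timestamped object-center positions. It is a deliberately simple stand-in for the learned predictors mentioned above; the track format and the function name are hypothetical.

```python
from typing import List, Tuple

# One annotated track: (timestamp_s, x_m, y_m) object-center positions.
TrackPoint = Tuple[float, float, float]


def forecast_constant_velocity(
    track: List[TrackPoint], horizon_s: float, step_s: float
) -> List[TrackPoint]:
    """Extrapolate future positions from the last two annotated positions."""
    if len(track) < 2:
        raise ValueError("need at least two annotated positions")
    (t0, x0, y0), (t1, x1, y1) = track[-2], track[-1]
    dt = t1 - t0
    if dt <= 0:
        raise ValueError("timestamps must be strictly increasing")
    vx, vy = (x1 - x0) / dt, (y1 - y0) / dt  # velocity in m/s
    steps = int(round(horizon_s / step_s))
    return [
        (t1 + k * step_s, x1 + vx * k * step_s, y1 + vy * k * step_s)
        for k in range(1, steps + 1)
    ]


# Made-up track of a vehicle moving 2 m/s along x.
track = [(0.0, 10.0, 2.0), (0.5, 11.0, 2.0), (1.0, 12.0, 2.0)]
future = forecast_constant_velocity(track, horizon_s=1.0, step_s=0.5)
print(future)  # [(1.5, 13.0, 2.0), (2.0, 14.0, 2.0)]
```

A baseline like this is useful mainly as a reference point: a trained model should outperform it on annotated sequences where objects turn, brake, or accelerate.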

Key Types Of Data Annotation For Self-Driving Cars

| Annotation Type | Purpose |
| --- | --- |
| Bounding Boxes | Label vehicles, pedestrians, and obstacles for object detection and tracking. |
| Polygonal Segmentation | Capture complex object shapes to improve precision in diverse environments. |
| Semantic Segmentation | Assign every image pixel to a specific class (road, car, sign, etc.) for holistic scene understanding. |
| 3D Cuboids | Define the depth and dimensions of objects in LiDAR and camera feeds for accurate distance measurement. |
| Landmark & Key-point Annotation | Identify key object features such as joints, lights, or corners for detailed analysis. |

These annotation techniques collectively empower AV systems to interpret complex driving scenarios, ensuring safer and smarter navigation.
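As a small illustration of semantic segmentation, the sketch below treats a mask as a grid of class IDs, one per pixel, and reports each class's share of the image. The class IDs, class names, and the tiny 3x4 mask are invented for the example; real masks are full image resolution.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical class map; real projects define their own taxonomy.
CLASS_NAMES: Dict[int, str] = {0: "road", 1: "car", 2: "sign"}

# A toy 3x4 semantic mask: every pixel carries exactly one class ID.
mask: List[List[int]] = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 2],
]


def class_shares(mask: List[List[int]], names: Dict[int, str]) -> Dict[str, float]:
    """Return the fraction of pixels assigned to each class present in the mask."""
    counts = Counter(class_id for row in mask for class_id in row)
    total = sum(counts.values())
    return {names[class_id]: n / total for class_id, n in counts.items()}


shares = class_shares(mask, CLASS_NAMES)
for name, share in sorted(shares.items()):
    print(f"{name}: {share:.1%}")
```

Per-class pixel shares like these are a quick way to spot class imbalance in a dataset, for example when road pixels vastly outnumber sign pixels.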

Accelerating Autonomous Vehicle Development With The Right Partner

As AV development accelerates, so does the need for large-scale, precise, and affordable training data. However, building and maintaining such datasets is complex, requiring domain expertise, human oversight, and automation.

That’s where EnFuse Solutions comes in.

We specialize in end-to-end data annotation services for autonomous vehicles, combining human intelligence with advanced automation tools. Our teams deliver:

- High-quality labeled datasets for object and lane detection

- Custom annotation workflows for multi-sensor data

- Quality assurance frameworks ensuring 99%+ accuracy

- Scalable solutions that adapt to growing data volumes

Partnering with EnFuse Solutions empowers automotive innovators to focus on AI development while we handle the intricate task of annotation — ensuring speed, accuracy, and cost efficiency.

The Road Ahead: Data Annotation Driving The Next Wave Of Innovation

The journey toward full autonomy is accelerating. Test vehicles logged ~9.07 million miles on public roads in California under AV permits between Dec 2022 and Nov 2023, according to California Department of Motor Vehicles (DMV) data, and the demand for precise annotation will only grow as that mileage increases. As vehicles become smarter and more connected, annotated data will remain the fuel that drives innovation in safety, performance, and user experience.

At EnFuse Solutions, we enable automotive pioneers to transform raw data into actionable intelligence — powering safer, smarter, and more efficient autonomous vehicles.

Talk to our experts today to accelerate your AV development with best-in-class data annotation services.
